Last year, I wrote about ransomware attacks (for the second time). I suspect there have been many since then, but you do not hear about most of them. Being the victim of one of these criminal attacks can be absolutely devastating personally. The costs to an organisation or government are even higher, and in some circumstances I think they could cause a business to fail.

The recent news is that Marks and Spencer, a British retailer, has been hit by a "cyberattack", and it looks like it is ransomware. There's a write-up at Bleeping Computer and a few details are given. It is very hard to know how to protect yourself from this sort of thing, or deal with the aftermath. There are obvious protections but I think the defence is always behind the offence and it's increasingly trite to just push the messages to "patch your systems" or update your "antivirus".

It looks like something has been going on at the Co-op as well. A "hack"? It's a bit suspicious, with all the news coming out of them just now. The lead of the linked BBC article is :

Staff at the Co-op are being ordered to keep their cameras on during remote work meetings, and verify all attendees, as the company deals with an ongoing cyber attack.

Well ... great. But I recall that, not so long ago, a company was conned into making a multi-million dollar transfer by criminals faking people on a video call (The Guardian) :

The British engineering company Arup has confirmed it was the victim of a deepfake fraud after an employee was duped into sending HK$200m (£20m) to criminals by an artificial intelligence-generated video call.

That is a lot of money. If the "AI" is now good enough to pass on video, then all bets are off!

Addendum:

A Scottish man has apparently been extradited from Spain to the USA. Tyler Robert Buchanan is alleged to be part of the nebulous cybercrime group "Scattered Spider", the people being blamed for the M&S cyberattack. Brian Krebs, who reports on this sort of crime, writes about it.

I bought a new keyboard and went with an HP 230 wireless model that includes a wireless mouse. It's inexpensive and only for use in my living room, so not heavily used. I have not used it much yet but it seems fine so far. I would not want to use it for much "proper" typing though. The keys are "chiclet" style with little action.

There is always a "setup" sheet with this sort of thing: inserting the batteries, turning on mouse etc. It is always small with tiny text. Often not very useful.

But why can HP not also include a guide to what the keyboard "icons" mean? I'm familiar with many but some are a little mysterious. Technology "Icons" are supposed to make functionality clear.

I don't know what the "F1" icon means.

Or the "F2". Although F2 works as usual for "rename" in a file manager.

"F3" cut I assume. "F4" copy?

Anyway, it is such a small thing to document, why not do it? I could not find this information online.

I have been using a Logitech diNovoEdge keyboard in my lounge since 2009. It still works but has started to become a little unreliable sometimes and it loses connection for 5-10 seconds more and more often. It's been much used and I think it is a great keyboard for the lounge/TV. It is much better than any of the other wireless keyboard/trackpad keyboards I have tried: they are often poorly designed and also a low quality build. It is a real shame Logitech stopped making them years ago. Anyway, time to try something else.

I rarely think about using a spell-checking program: I've always thought I was fine reading what I wrote and fixing any mistakes myself. I can spell. But the other day, reading back a short blog post (again) I noticed a couple of errors I should have seen earlier. I could easily have "published" them. This made me think about a vim spell-checker.

It turns out there is a very easy way to do a spell-check in vim:

:set spell

This highlights words considered misspelled. There are keys to jump between them and also to pop open a list of suggestions (see: :h spell).

A very useful tool on occasion. I must remember to use it before pushing anything out to the world!

If you use DHCP anywhere, you know that the server sends network configuration to you, the client, to set up things like your IP address, gateway, DNS server etc. An essential service today for most devices. What if you change something in the server and need to refresh these settings?

On Linux, when using the NetworkManager program to deal with networking, you can easily release and refresh the configured settings using the NM command line tool nmcli. See what network connections are present :

nmcli connection show

Identify the network you want to refresh ("NAME") and switch it off ("down") :

nmcli connection down id "NAME"

Replace "NAME" with the name of the connection (you will need quotes if there are any spaces in the name of the connection).

Turn it back on ("up") :

nmcli connection up id "NAME"

This should refresh your network configuration to reflect the server change you have made.
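The two steps can be wrapped in a small shell function. This is only a sketch: the function name refresh_connection and the DRY_RUN variable are my own inventions for illustration, not part of nmcli. With DRY_RUN=1 the commands are printed rather than run.

```shell
# Sketch: bounce a NetworkManager connection so it requests fresh DHCP settings.
# refresh_connection and DRY_RUN are hypothetical names, not part of nmcli.

refresh_connection() {
    local name="$1"
    if [ -z "$name" ]; then
        echo "usage: refresh_connection NAME" >&2
        return 1
    fi
    local action
    for action in down up; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            # Print the command instead of running it.
            echo "nmcli connection $action id \"$name\""
        else
            nmcli connection "$action" id "$name" || return 1
        fi
    done
}
```

For example, DRY_RUN=1 refresh_connection "Wired connection 1" shows the two nmcli commands that would be run. Be careful doing this over SSH: if the session runs over the connection you take down, the "up" step may never happen.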

References:

Putting my "What I learned Today" efforts in the shade is Josh Branchaud's "Today I Learned" (TIL) repository of useful knowledge. He's been adding to this for quite a few years and it's a very impressive collection. It's on GitHub.

There is a more straightforward way to do this, plus a more arcane way (syntax-wise). There's a lot of arcane in the Bash Shell and in some ways, it's like Vim: you just have to get used to things, try and remember them and not ask "why?" too much.

If you have a file path and want to know the last part (usually the file name) only, you can use the command basename (man basename) e.g.

If I have a path : /usr/include/stdio.h, then :

basename /usr/include/stdio.h

Gives me : stdio.h.

The command also has options to strip a suffix (e.g. the ".h" part).

Alternatively, a more arcane way is to use the parameter expansion functionality of the Bash shell.

FILENAME="/usr/include/stdio.h"

echo "${FILENAME##*/}"

The "##" removes the longest match of the pattern that follows it from the front of the value. Here the pattern is "*/", so everything up to and including the last "/" is stripped.

This also gives : stdio.h

Did I mention arcane? There are a lot of very useful features like this in the Bash shell but they can be a little hard to remember sometimes (and you have to watch out for "gotchas" when using them!).
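A few related expansions on the same path, as a quick sketch. The variable names (name, dir, stem, DIRPATH) are just mine for illustration. The last two lines show one gotcha: with a trailing slash, the expansion and basename disagree.

```shell
# Related parameter expansions on the same path.
FILENAME="/usr/include/stdio.h"

name="${FILENAME##*/}"    # longest match of "*/" removed from the front: stdio.h
dir="${FILENAME%/*}"      # shortest match of "/*" removed from the end: /usr/include
stem="${name%.h}"         # ".h" suffix removed: stdio

echo "$name $dir $stem"

# basename can strip the suffix too (prints stdio).
basename "$FILENAME" .h

# Gotcha: a trailing slash leaves nothing after the last "/".
DIRPATH="/usr/include/"
echo "[${DIRPATH##*/}]"   # prints []
basename "$DIRPATH"       # prints include
```

The expansion is done by the shell itself, so no extra process is started, which can matter inside a loop over many files.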

Reference:

See Bash Manual : Parameter Expansion

In vim you can be in a visual selection mode, perhaps selecting only parts of the text file. Normally, a substitution operation (e.g. replace string "2022" with "2023") operates on a whole line (or range of lines). To restrict this to operate only on the visual selection you have, use the "\%V" pattern at the start of your search.

So, assuming a visual selection is highlighted and within this I want to change "-" to " " :

:'<,'>s/\%V\-/ /g

A highlight containing "This-is-a-test" becomes "This is a test".

Reference :

See Vim help for "\%V" (:h /\%V)

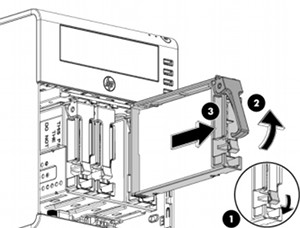

My server has USB 2.0 only and I thought I'd upgrade it to USB 3.0 via a Startech USB 3.0 PCI card. Installation was straightforward but after restarting the computer I discovered my networking was broken.

It turns out that my ethernet device used to be enp2s0 but was now enp3s0 and my network setup failed.

This type of kernel device name is created based on various schemes e.g. the physical location of the connector of the hardware on the PCI bus. See the Redhat Docs.

I've been using Linux for many years now and computers have changed a lot in this time. Leaving aside the huge advances in CPU, RAM and storage, many computer devices are not "fixed" in place but can come and go (even CPUs and RAM). Mostly, these are devices that get plugged in or out, such as a USB mouse or external USB hard drive. PCI devices are also capable of being "hotplugged" and when any of this happens, the kernel has to scan the new configuration and determine what devices are present. Sometimes it has to re-arrange the device names.

My server is an old HP Microserver (N36L) : 12 years old now but still going strong (although it needed a new power supply last year). Because I use an external USB disk for backup, USB 3.0 will speed things up a lot (I hope). On to the reboot and networking failure ...

To fix this, I could just change my ethernet device name in my network setup files (i.e. /etc/network/interfaces on Debian). I decided to use systemd and create my own persistent and simple (old-fashioned) name for the device.

Systemd Link

This is "link" as in a network link (man page : systemd.link).

Use :

ip l

to get the MAC address for the network device enp3s0. Then create the file :

/etc/systemd/network/10-eth0.link

The file must end with ".link" and preferably begin with numbers (as a run order). It contains :

[Match]
MACAddress=<YOUR MAC ADDRESS>

[Link]
Name=eth0

I edited my network setup scripts (Debian : /etc/network/interfaces) to use the network name "eth0". Now, when I boot the system, the name "eth0" is set on the network device with my main ethernet MAC address and will stay that way.
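The file can also be generated rather than typed. A minimal sketch, assuming the MAC address is read from /sys/class/net/<device>/address; the helper name write_link_file and its optional directory argument are hypothetical, added only so it can be tried out in a scratch directory first.

```shell
# Sketch: write a systemd .link file that pins a name to an interface's MAC.
# write_link_file and its arguments are hypothetical helpers for illustration.

write_link_file() {
    local mac="$1" name="$2" dir="${3:-/etc/systemd/network}"
    # [Match] selects the device by MAC address; [Link] sets its name.
    printf '[Match]\nMACAddress=%s\n\n[Link]\nName=%s\n' "$mac" "$name" \
        > "$dir/10-$name.link"
}

# Example (needs root when writing to the default directory):
#   mac="$(cat /sys/class/net/enp3s0/address)"
#   write_link_file "$mac" eth0
```

Matching on the MAC address rather than the PCI location is the point here: the name then follows the card even if another PCI card shifts the bus numbering again.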

I must add that I like systemd and how it's changed the Linux boot and system control landscape.

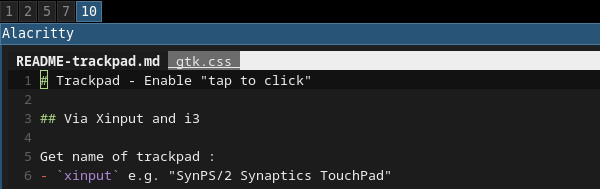

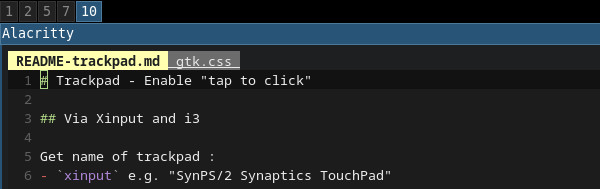

What I learnt Lately no. 2 : How to change the colour Vim uses for its tabs.

The editor Vim has "tabs" similar to tabs in other applications (text editors, browsers), or at least can be made similar. I use a plugin called buftabline for my Vim tabs. Vim buffers are shown as classic-style tabs along the top of the window.

One thing I didn't like was the fact that the empty space on this tab bar was shown as white :

To change this, use the "highlight" command and apply different colours to the tab elements :

That's much better and a lot clearer to see what buffer/tab I'm looking at with a glance.

This is what I have in my .vimrc settings file :

highlight TabLineFill term=bold cterm=bold ctermbg=0

highlight TabLineSel ctermfg=0 ctermbg=LightYellow

Note that the Vim plugin buftabline has its own set of colour (highlight) groups e.g. BufTabLineActive (these are linked to the built-in tab groups). See the GitHub page.

Reference :

This page was very useful :

I love tinkering around with the computer and digging into some of the aspects of running Linux, or the more interesting applications it can host. Take the editor Vim. Not a text editor for the masses (to say the least) but it does have an extremely broad and deep set of features and also a whole swathe of settings for them. I've not done much painting recently and have spent some time sorting out my computing experience. For this, I've learnt quite a few new things and it's made me remember how pleasurable learning stuff is.

So : What I have learnt lately a.k.a. WILL. Maybe I will try and document some of these things, if only for my own reference later.

Vim Lookaround

Viewing some old server backup logs (something else I've been "fixing"), I wanted to jump to the next line not starting with a word ("deleting").

I use the Vim text editor and to find a line that starts with a string, use the "^" regex anchor. Press "/" and :

/\v^deleting<cr>

The "<cr>" means press the return/enter key. So "^deleting" matches the word deleting at the start of a line.

The "\v" means interpret the pattern as "very magic", so there is no need to escape brackets etc. See vimdoc for an explanation.

Now for the good stuff (and new to me) : to find a line not starting with "deleting", press "/" and :

/\v^(deleting)@!<cr>

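This is similar to before but we wrap the pattern in brackets and end with "@!". The "@!" is a negative lookahead: it matches, without consuming any text, only where the bracketed pattern before it does not match at the current position. The "^" anchors that position to the start of the line, so the search lands on lines that do not begin with "deleting".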
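Outside Vim, the same question can be cross-checked from the shell with an inverted grep. This is a sketch only: first_non_deleting is a hypothetical helper name, and the -m option is assumed to be available (it is in GNU and BSD grep, but it is not in POSIX).

```shell
# Sketch: print the first line (with its number) that does not start with "deleting".
# first_non_deleting is a hypothetical helper name.

first_non_deleting() {
    # -v inverts the match, -n prefixes line numbers, -m 1 stops after one line.
    grep -v -n -m 1 '^deleting' "$1"
}
```

Called on a log file, this prints something of the form "LINE:TEXT" for the first line that would also be found by the Vim search from the top of the file.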

To help retain a piece of knowledge, it's usually useful to write it down somewhere.

Reference :

Following on from the DDOS Brian Krebs was hit with a week or so ago, he writes about certain Chinese "Internet of Things" companies :

I don’t normally think class-action lawsuits move the needle much, but in this case they seem justified because these companies are effectively dumping toxic waste onto the Internet. And make no mistake, these IoT things have quite a long half-life: A majority of them probably will remain in operation (i.e., connected to the Internet and insecure) for many years to come — unless and until their owners take them offline or manufacturers issue product recalls.

One of the many appalling things about these things is that many just cannot be secured at all. It's all smoke and mirrors : the web interface might let you change the default password, but this might not actually save it. Or there are other default passwords (for other routes into the system) that cannot be changed.

Some security experts are now coming round to the idea that the government might need to step in and mandate some fixes. The EU appears to be starting down this route now.

The future of work has been in the news off and on for a while, especially as AI and robotics start to make more of an impact. Lorry drivers are one profession said to be threatened by self-driving vehicles, but many white-collar employees are also at serious risk. For instance, computers can do very efficient legal discovery (and a lot more cheaply). We might start seeing higher unemployment, more underemployment, lower wages and the need to work much longer. Tornadoes weaving a path of destruction through the workplace?

A couple of years ago, The Economist magazine talked of :

Just as robots became ever better at various manual tasks over the past century—and were therefore able to replace human labour in a growing array of jobs, beginning with the most routine—computer control systems are able to handle ever more of the work done by human administrative workers. Jobs from truck driver to legal aid to medical diagnostician to customer service technician will soon be threatened by machines. Starting with the most routine tasks.

The article above starts off quoting David Graeber on his labelling of many jobs as "bullshit jobs" (Strike Magazine): massive swathes of usually administrative jobs in areas like health administration, human resources and public relations. A very different type of economy from the "classic" one of people designing and making things. I might argue that this has really always been the case however, at least since the Industrial Revolution.

David Graeber (author of Debt: The First 5000 Years and LSE Professor) :

While corporations may engage in ruthless downsizing, the layoffs and speed-ups invariably fall on that class of people who are actually making, moving, fixing and maintaining things; through some strange alchemy no one can quite explain, the number of salaried paper-pushers ultimately seems to expand, and more and more employees find themselves, not unlike Soviet workers actually, working 40 or even 50 hour weeks on paper, but effectively working 15 hours just as Keynes predicted, since the rest of their time is spent organising or attending motivational seminars, updating their facebook profiles or downloading TV box-sets.

Maybe the French have a word for this :

I was at the V&A on Sunday looking at some ceramics and glassware. The sixth floor was almost empty, apart from me and three or four other people (later). But there are still guards around, all day, every day. Lots of interesting things to look at and read, but the job seems to be very very dull.

Some glass ornaments and sculptures were extremely striking :

Above: Deep Blue and Bronze Persian Set by Dale Chihuly, 1999.

From the museum label :

Originally reminiscent of the tiny core-formed bottles of ancient Egypt and Persia, the 'Persians' series was begun in 1985. Since then Chihuly has developed the series into a range of different shapes, the outer ones often of enormous size.

My laptop of choice has always been a Thinkpad, firstly as made by IBM and latterly by Lenovo. I own an X220 (and an older X60s, still a wonderful little machine), and even though it's a few years old now it's still a great laptop.

One of the big reasons I'd still buy a Thinkpad is their build quality. Also, if you need to do any maintenance on the system (e.g. upgrade RAM, swap the mSATA SSD), the documentation is very good (much better than Dell's for instance).

People often enthuse about the build quality of Apple laptops, but I'm not willing to spend money with Apple. And even if I was, it doesn't seem such a good idea to replace Mac OSX with Linux. Linux generally runs very well on the Thinkpad.

Currently with Debian "Jessie" (Testing) installed and the i3 tiling window manager. It's very refreshing not having all the desktop clutter around. Not really any desktop at all in fact.

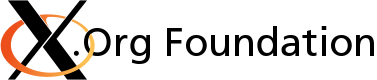

Another recent Adobe Flash security update has me at the Adobe site again, trying to remember the update process for Linux. I use the 64 bit version and have to un-tar the archive and copy the .so to the right folder.

Adobe stopped shipping new versions of Flash for Linux a while back, but promised to keep the Linux versions updated for security (Thanks Adobe). But it's still odd seeing the different versions available for the other platforms.

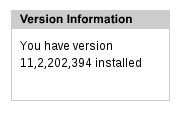

I have :

Windows, Mac and Google Chrome have :

Note that the version Adobe say is the latest for Linux is wrong - 378, compared to what I just downloaded and installed - 394. Who knows? Security is hard, as is updating web pages!

Not a fan of Flash and I look forward to it disappearing. But I'm even less a fan of computer security problems, and especially the people that inflict them on us. So keep your software up to date!

It's been a very long time since I've played around configuring X Windows on Linux but I've recently had the "pleasure" again. X mostly just works now, and there's no more need to fight monitor modelines or an arcane xorg.conf file (the file usually doesn't even exist anymore). This is a very good thing because X setup was sometimes a nightmare.

For the past few years, I've done well to steer clear of proprietary graphics drivers as well, drivers for hardware like ATI/AMD or NVIDIA. I've chosen Intel graphics hardware because Intel writes good open-source drivers. If I happen to be using ATI/AMD or NVIDIA hardware, I try to use the open-source radeon or nouveau driver respectively.

X was the only big component of the Linux desktop stack that I never compiled from source, back in the mid-1990s (when X was "XFree86"). Too scary, and perhaps my 486 CPU, 128MB RAM and 33.6k modem weren't so up to it.

Not my graphics card

When I bought a cheap(ish) laptop to use as my "desktop" (TV/HDMI connected) a year or so ago, I hit a small snag in that it's based around an AMD chipset for graphics (and HDMI audio) and an Atheros for ethernet. I had to download, compile and load an out-of-tree Linux kernel module to get the ethernet working. Very 1990's.

An out-of-tree module means you have to remember to rebuild it if you ever upgrade your kernel. Luckily, the ethernet module (alx) is now "in-tree" from 3.11 and I'm using Debian Wheezy backports.

Generally, all's been well. I don't play computer games so have no need for fast 3D, just decent 2D performance. I'm not sure what happened but a while ago I noticed that full-screen desktop video had got very choppy (tearing). The desktop felt "stickier" than usual. So, I decided to (perhaps foolishly) try the proprietary AMD Catalyst driver and see if things are better.

Past experience with graphics driver updates on Linux has been varied, to say the least. I recall painful times and black screens, but this was a long time ago and I have much more experience and confidence now. This stuff is generally still a bit of a black art if things don't work out though!

Got the Catalyst 13.12 release driver installed and working, after a bit of messing around. A bit more playing with xrandr on the laptop to sort out display outputs to laptop, TV and/or both. Made sure I was using kernel 3.11 from backports as well and that the AMD driver supported this. Result? Video playback seemed good now and all appeared well (plus it didn't take long). Success! Or so I thought ...

A Key Problem

A problem quickly manifested itself during the week: key press delays in X applications.

Pressing a key in the web browser search box (for instance), would exhibit 1/2 second delays occasionally. Maybe 1 or 2 seconds sometimes. Consoles were fine: at least konsole and rxvt. Laptop keyboard directly or USB keyboard showed the same issue ... very annoying.

So, on the merry-go-round again ...

To investigate, I planned another look at X for a Saturday. This time I updated the kernel to a new backports version 3.12, and downloaded and installed the AMD Catalyst driver 13.11-beta (which said it supports kernel 3.12). A bit of trouble :

- I had to do a force install because the previous AMD driver didn't want to --uninstall.

- I had to do some symlinking to link libGL.so.* from /usr/lib to /usr/lib64 (not sure why this was wrong - poor AMD/ATI Debian x64 support?).

But still the same key press problem ...

Key presses worked fine with the radeon driver but not the AMD driver. So I started to look at X Server options, starting with the "easy" stuff via the memorably named amdcccle, the AMD graphics control centre (a graphical application).

I enabled the "tear free" control (sync to vertical refresh), which was off, and this seemed to fix my key press delay trouble.

Apparently, I could have used the following command to enable this as well :

aticonfig --set-pcs-u32=DDX,EnableTearFreeDesktop,1

Thank goodness for that!

At the end here, I was going to start up the control centre and get a small screenshot of it. But it gave me a segmentation fault ... ugghh.

X Windows is due to get a replacement in Wayland at some point in the future. I can't say I'll miss it. In fact, there are a few quite exciting developments happening in desktop Linux-land just now so it should be an interesting year or two.

As much as I liked the Firefox OS phone, I've stopped using it and bought a new Motorola Moto G Android phone.

The ZTE is just too slow and I found it increasingly painful to use. I hope to see Mozilla get their mobile OS on better hardware and, at that point, I'd have another look. For the money paid (£65) it's no great loss and the Moto G (at £160) is an amazing phone.

There'll be no FF OS updates from v1.0 here it seems, and a last straw was discovering my Bluetooth headphones won't work with it: extremely minimal Bluetooth support. Couple this with a slow touchscreen, sometimes needing multiple presses to get a response, and then a few complete freezes and I've given up. For me, attempting my own OS builds doesn't seem a reasonable thing to do.

The Motorola Moto G is a new "Google" phone and runs (almost) stock Android 4.3 (upgrade to 4.4 soon apparently). I haven't used Android on a phone since 2.2 (Cyanogen) and the changes are huge. It's an extremely polished interface, the whole thing looking and feeling great. It's fast, has a great screen and seems to have very good battery life as well. I am very happy with it.

Less satisfying is that MTP, the "Media Transfer Protocol", doesn't work very well on Linux. Ironic this is so bad, considering a) Android is based on Linux and b) Google do a lot of work using (and engineering) Linux. Go-mtpfs seems to work on my desktop (manual mount, fine) but not on my X220 laptop (this morning). MTP support seems to be fragile, spotty and therefore quite annoying!

I've never been happy with relying on a cheap ADSL router/modem for firewall security on my home network, but this is what I've been doing for a long time now. How secure is its firmware, does it get updates? Control and configuration is often poor.

Basically, a little "white" box running [1] who knows what.

[1] Usually some version of Linux, often old and perhaps with "patches". Security updates either non-existent or hard to find.

So I bought a cheap but much superior solution from LinITX: an Alix based device running the pfsense firewall.

This runs FreeBSD wrapped up in a very nice web-based GUI to manage a pretty sophisticated firewall/router. A little red box running a known quantity.

What this means in the first instance is I've had to familiarise myself with firewall rules and logs again, something I've not done for a long time (when I used to run one or two Linux firewalls). I've set the box up to be a perimeter device and also plugged in my WIFI as its optional interface. Staring at logs and trying to tweak rules to minimise logs, in some cases scratching my head over odd packets, or hard to hide logs ...

As a slightly paranoid system administrator, the easy availability of firewall logs and rules can keep me up a bit later than usual now.

Intersecting this interest was a report I saw about a test Channel 4 TV are doing just now called Data Baby. They are monitoring the information mobile phones are sending out, which turns out to be a lot, even when they're doing "nothing".

As the phone sat, apparently silent, contacts were in fact being made with 76 different servers around the world, in countries from the US to Europe to China and Singapore.

Mr Miller said: "the interesting thing is, and (it) might be surprising to a lot of people is, that (the) phone is always active. It always has an internet connection, and so the applications, if they choose to, can continue communicating after you've put it down."

My Nexus7 Android tablet sits in my kitchen and I sometimes use it for streaming radio (Tune In), Skype or browsing. It's idle for most of the time, but there is constant traffic to Google's servers, and even the BBC. I haven't captured the traffic to look at it in detail, no doubt it's all quite innocent and normal. But now we all carry these little networked multimedia computers in our pockets, do we need to have some assurance on what information it sends out? What is your phone doing? What permissions do you give an application when you install it?

Not only the phone. Recent reports detail how LG televisions might be logging information about files you are using and sending data back to the manufacturer. Spying basically. Not the sort of thing people would expect of a TV, but all of a piece when consumer electronics converge to be multimedia networked devices. Time to get the wire sniffer out ...

I own an HP ProLiant MicroServer, a great little box I bought a couple of years ago to act as my main file server/NAS machine. It's held up very well and it was very cheap because I got £100 cashback in a deal (and it was cheap already).

It's not a powerful computing machine by any stretch but a very decent server: I've put 8GB RAM in it and 4 2TB disks in RAID5. It's also very decently built by HP, with some of the care and attention you'd expect on a bigger server. Hence the ProLiant badge.

One reason I prefer it to my QNAP T419P is that it's got a display connector. The QNAP is serial only, so a bit more fiddly.

To maximise the available storage capacity, I installed Debian on an 8GB USB stick and use the 4 hard disks for the RAID only. Generally, this has been fine, but I have started noticing some fairly severe I/O latency hits recently and this has started causing more frequent pain elsewhere. Combine this with some USB filesystem corruption a few weeks ago and I wanted to switch away from this configuration.

However, I also learned that the stock HP BIOS does not enable all the system features, including a "spare" SATA port on the motherboard, supposed to be used for a DVD or CDROM. Without another SATA port, it's impossible to add another drive for the OS.

Luckily, I came across a great web page by Joe Miner describing how to update the HP BIOS and enable these hidden features. The usual caveats apply: this is not an officially sanctioned "update" (in fact, it isn't adding anything, but "un-hiding" things. The version remains the same).

Having done the update, I now have an extra motherboard SATA port and have also made all the ports default to 3Gbps. I've also stuck a spare 2.5" SATA hard drive in the empty CDROM space.

With this extra disk installed, I used debootstrap to install a new version of Debian on the disk and configured this new install, adding boot loader etc., while the "old" system was running. On Sunday morning I rebooted into the new system, fixed up a few missing bits and pieces and now have a brand new OS installed on a proper disk. So far, so good.

Computers can be pretty frustrating, even when you think you understand them fairly well. This understanding might make things worse in some ways, as you'll go the extra mile, persevere a bit longer, do the extra debugging and perhaps end up no better off (except even more frustrated).

What's brought this about? Well, over and above the usual nitpicks :

Mozilla Thunderbird IMAP Issues

My domain email stopped working a few days ago. Initially I thought it was just an email dry spell, but some more concerned digging showed a problem connecting to my IMAP server (dovecot).

Some potential complexity here ... IMAP itself but especially the SSL layered over it (imaps). So a fair amount of anxiety about what might have been broken - server update? expired certificate? problem certificate? or a problem on the client computer, or client mail application?

Suspicion settled on the client application, Mozilla Thunderbird, and I went through a slightly painful process of regressing some major releases and finding that version 23.0 broke things.

Somehow I had managed to get through v23.0, 24.0 and 24.0.1 via the automatic updates with a working mail capability. At least until last week. I am not sure how!

Posted some notes and asked for comment on mozillaZine, and then ended up logging a bug. I should have expected this, but I was then tasked with finding the nightly regression point, a potentially painful process. "Luckily", being on holiday meant I have had some time to do this ...

Mozregression didn't seem to work well for me, not finding any break point, so I took the manual route of downloading some releases close to the last version that worked for me (release 22.0) and seeing where it failed :

2013/05/2013-05-23-00-40-20-comm-aurora/ ----- BAD

...

2013/05/2013-05-20-00-40-04-comm-aurora/ ----- BAD

...

2013/05/2013-05-16-00-40-19-comm-aurora/ ----- BAD

...

2013/05/2013-05-14-00-40-02-comm-aurora/ ----- BAD

2013/05/2013-05-13-00-40-21-comm-aurora/ ----- OK

2013/05/2013-05-12-00-40-18-comm-aurora/ ----- OK

...

2013/05/2013-05-06-00-40-01-comm-aurora/ ----- OK

...

2013/05/2013-05-02-00-40-01-comm-aurora/ ----- OK

So, IMAP to my domain broken with the 2013-05-14 build. Let's see how things go.
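The manual hunt above is really a binary search, which can be sketched in shell. This is an illustration under assumptions, not what I actually ran: is_good is a hypothetical stand-in that only compares dates (the real check was downloading a build and trying IMAP by hand), and the build names are shortened to the dates listed above.

```shell
# Sketch: binary search for the first BAD build in a list sorted oldest to newest.
# Assumes the first entry is known OK and the last is known BAD.
# is_good is a hypothetical stand-in for the real, manual check.

is_good() {
    # Stand-in rule: builds dated before 2013-05-14 count as OK.
    [ "$(printf '%s' "$1" | tr -d '-')" -lt 20130514 ]
}

first_bad() {
    list="$(printf '%s\n' "$@")"
    lo=1
    hi=$#
    # Invariant: entry number lo is OK, entry number hi is BAD.
    while [ $((hi - lo)) -gt 1 ]; do
        mid=$(( (lo + hi) / 2 ))
        build="$(printf '%s\n' "$list" | sed -n "${mid}p")"
        if is_good "$build"; then
            lo=$mid
        else
            hi=$mid
        fi
    done
    printf '%s\n' "$list" | sed -n "${hi}p"
}

first_bad 2013-05-02 2013-05-06 2013-05-12 2013-05-13 \
          2013-05-14 2013-05-16 2013-05-20 2013-05-23
```

With these eight dates the search needs only three checks to settle on 2013-05-14, rather than trying every build in turn.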

- Bug : 930878

IMAP with SSL/TLS,normal password fails to retrieve mail after v22.0

One always wonders ... it's probably my fault somewhere. Still Diggin' :-)

Software RAID Failure

Did I mention holiday? A couple of days ago I got an email with subject line :

Fail event on /dev/md/2:shuttle

That's a disk failure with a RAID mirror I have in a system (where I normally stage the blog). Something to look forward to fixing when I get home. Hopefully the remaining disk stays well, always a slight concern with something like this.

On top of this issue, I have SMART complaining on another system about "unreadable sectors" but this is something I've been monitoring for a couple of months, the number not increasing for now. RAID is not a backup, but it helps mitigate hardware failures.

A quick follow-up to this. The 500GB 2.5" SATA disk I was going to use as a replacement might not be healthy itself. I did a quick smartctl health check on it and it spat out some warnings :

==> WARNING: These drives may corrupt large files,

see the following web pages for details:

http://knowledge.seagate.com/articles/en_US/FAQ/215451en

http://forums.seagate.com/t5/Momentus-XT-Momentus-Momentus/Momentus-XT-corrupting-large-files-Linux/td-p/109008

http://superuser.com/questions/313447/seagate-momentus-xt-corrupting-files-linux-and-mac

I didn't know smartmontools did this. What a great feature. So, looks like I need to flash the Seagate firmware.

Update

Disk firmware updated, replaced in RAID and syncing the mirror ...

Laptop Random Hibernations

I'm trying Debian Testing (Jessie) on my ThinkPad X220 and it's generally been fine. In fact, in many ways it's the best and fastest version yet (and the laptop's pretty good as well).

However, I've had it decide to hibernate itself when I'm not looking. This wouldn't be so bad except it has a problem resuming (libgcrypt message, similar to bug 724275), so this turns into a hard reset. As usual, there are a number of places I could look to solve this (initramfs, acpi, uswsusp etc.) and I'll see if I can find some time to do some debugging. Chasing this sort of issue is particularly tough because of the need for rebooting/hibernating to test things.

I was going to follow up a post on the Debian Users web forum but it looks like my account has been "deactivated" manually by an admin and I can't re-activate or re-register (username in use!). It's a large bit of friction having to send a mail to the admins about it and a bit of a crappy policy if you ask me ...

So ...

Maybe I have too many computers, and too many computer-related activities going on. I'm juggling different virtual machines running different versions of Debian, doing different things and occasionally thinking about synchronisation. Silly things such as whether to run the development system VM on KVM or switch to VirtualBox? If I use both, what are the best ways to sync them up? Converting a raw KVM disk to a VDI etc.
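For the raw-to-VDI conversion, one option is `qemu-img convert`, which can read a raw image and write VirtualBox's VDI format. A minimal Python sketch that wraps it follows, assuming `qemu-img` is installed and on the PATH. The function names and the file names in the usage note are hypothetical, and this is only one possible route (VirtualBox ships its own conversion tooling as well).

```python
import subprocess
from pathlib import Path
from typing import List


def raw_to_vdi_command(raw_image: Path, vdi_image: Path) -> List[str]:
    """Build the qemu-img command line to convert a raw disk image to VDI."""
    return [
        "qemu-img", "convert",
        "-f", "raw",   # input format: raw KVM disk image
        "-O", "vdi",   # output format: VirtualBox VDI
        str(raw_image),
        str(vdi_image),
    ]


def convert_raw_to_vdi(raw_image: Path, vdi_image: Path) -> None:
    """Run the conversion; raises CalledProcessError if qemu-img fails."""
    subprocess.run(raw_to_vdi_command(raw_image, vdi_image), check=True)
```

Usage would be along the lines of `convert_raw_to_vdi(Path("devel.img"), Path("devel.vdi"))`, with the source VM shut down first so the image is not changing underneath the copy.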

No wonder the odds increase that I end up in pain sometimes. The aim is always to get things sorted and arranged in such a way that I can actually do some work, or something worthwhile. Not spend all day fixing or configuring things before managing any of that!

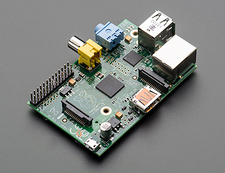

I've been setting up my Raspberry Pi again. Last time I used it to monitor a server room door by taking webcam pictures and emailing them to me. Now I'm considering if it would work as a WiFi access point.

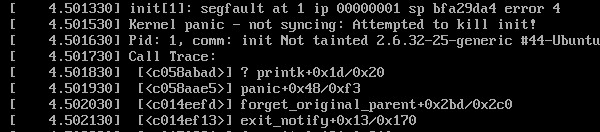

Downloading and imaging the latest Raspbian to an SD card worked fine but I kept having problems. Works fine initially but the next morning I'd see massive filesystem corruption (segmentation fault, signal 11 etc. just doing an ls etc.). Maybe a bad SD card? So, try another: same thing the next day. Maybe my Pi is broken?

Turns out to be a poor power supply. I'd plugged it into a micro-USB phone charger that just isn't giving out the right power (needs a good 5V). Try a better supply and it seems fine now. This shows how critical a good power supply is!

How critical? Well, the wrong type can kill you, so be careful. A while ago, a Chinese iPhone user was supposedly electrocuted and died, probably using a poor (and fake) charger. For a detailed look at this, see Ken Shirriff's blog.

A while ago, I switched my Linux desktops from Gnome 3 to XFCE. I've now switched to KDE. Not because there's anything wrong with XFCE: I just wanted a change.

This is a big turnaround for me however because it is not that long ago that I would have sworn never to use KDE. I last tried it over 10 years ago and thought it had a lot of very rough edges, plus felt and looked "cheap". Alongside too many half-baked "K" applications and a ridiculous number of configuration parameters and settings, a significant part of it didn't work very well.

It's completely different now and the Debian Wheezy version I'm using (KDE 4.8.4, a few versions out of date now) not only looks fantastic but almost all of it works as expected.

I've had it crash once whilst messing with a particular desktop setting, but it restarted itself automatically and carried on from where it left off. In comparison, I booted Gnome 3 a few weeks ago, just to have another look, played with the ALT+TAB/ALT+` switching for a few seconds and it crashed the desktop. Unlike KDE, it didn't recover but logged you out, losing everything.

The desktop still has a lot of settings to browse but the control panel makes sense and it all seems properly integrated. There are still a few areas I don't properly understand, KDE activities being the main one, but you can ignore them until you feel like having a better look. Virtual desktops work as usual.

All in all, I'm really liking KDE. The developers are doing a wonderful job and the Qt toolkit gets better and better. Definitely worth a look.

For people that want to have a play themselves, you can download a KDE live DVD (e.g. Suse do KDE well), boot from it and play without affecting or changing anything on your PC.